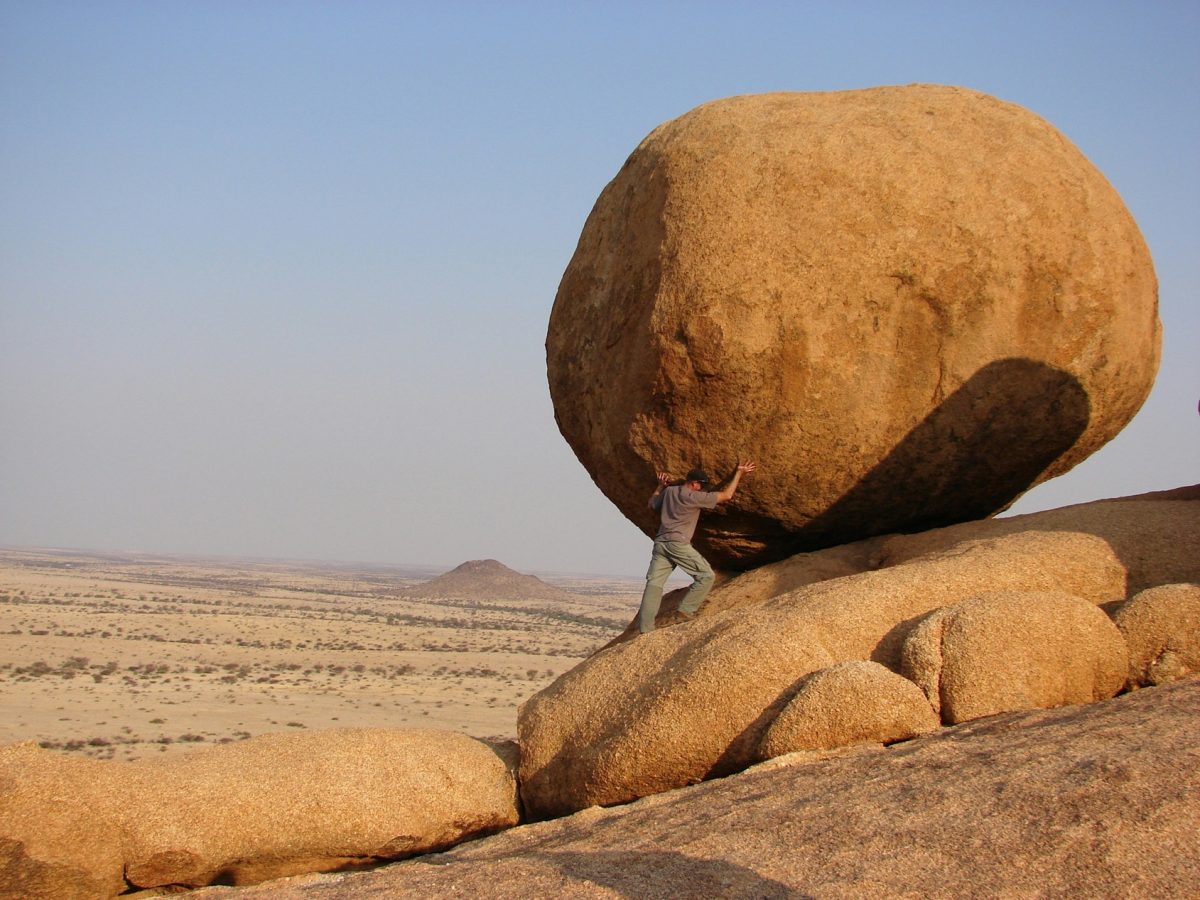

The typical ad campaign may do far less for a brand than many studies would have you believe.

According to a study just published in the International Journal of Research in Marketing, meta-analyses that use marketing mix models (MMM) to calculate the effects of campaigns have tended to exaggerate the benefits of advertising by at least a factor of 5.7.

The authors of the paper used new statistical techniques to eradicate biases from the data sets of already published meta-analyses. When they did so, they found that reported advertising elasticities fell from between 0.09 and 0.47 to between 0.003 and 0.1.

These figures mean that if a brand spends 1% more on advertising, it can typically expect sales, consumer choice or market share to increase by between 0.003% and 0.1%.
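To make the arithmetic concrete, here is a minimal sketch of how an elasticity turns a change in ad spend into an expected change in sales. It assumes the usual small-change approximation (percentage change in outcome ≈ elasticity × percentage change in spend). The function names, the 10% spend increase and the baseline of 1,000,000 units are hypothetical illustrations, not figures from the paper.

```python
# Corrected elasticity range reported in the article.
ELASTICITY_LOW = 0.003
ELASTICITY_HIGH = 0.1


def expected_pct_change(elasticity: float, spend_pct_change: float) -> float:
    """Approximate % change in the outcome for a small % change in ad spend.

    Uses the linear approximation: %Δoutcome ≈ elasticity × %Δspend.
    This is only reasonable for modest changes in spend.
    """
    return elasticity * spend_pct_change


def expected_extra_units(elasticity: float, spend_pct_change: float,
                         baseline_units: float) -> float:
    """Translate the % uplift into additional units sold on a given baseline."""
    return baseline_units * expected_pct_change(elasticity, spend_pct_change) / 100


if __name__ == "__main__":
    spend_increase = 10.0       # hypothetical: 10% more ad spend
    baseline = 1_000_000        # hypothetical: units sold before the increase

    for label, e in (("low", ELASTICITY_LOW), ("high", ELASTICITY_HIGH)):
        pct = expected_pct_change(e, spend_increase)
        units = expected_extra_units(e, spend_increase, baseline)
        print(f"{label} elasticity {e}: about {pct:.3f}% uplift, "
              f"roughly {units:,.0f} extra units")
```

Because the approximation is linear, it should not be stretched to large shifts in spend.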

While these updated elasticities are dramatically lower than those previously reported by the MMM studies, the authors note that they are generally in line with the results of studies that use randomised controlled trials (an experimental setup with one control group and one intervention group) to estimate the effects of advertising.

Joseph Korkames, the lead author of the new paper, argues that existing MMM meta-analyses tended to exaggerate the effects of advertising because they failed to properly account for misspecification, aggregation, or publication selection bias.

Publication selection bias — ignoring campaigns or data that demonstrate little to no effect on consumer behaviour — is believed to be the biggest corrupter of results. On average, writes Korkames, it exaggerates advertising elasticities by at least 0.08.

But Korkames is careful to state that it would be ‘erroneous’ to infer from his paper that ‘advertising has no effect’. For a start, it’s hard to know from the paper how much of the data comes from mature companies that have already optimised their marketing to an extent that any subsequent increases in ad spend could only be expected to yield small improvements.

And most of the paper’s estimates are based on small changes to a brand’s advertising, writes Korkames, not dramatic shifts. Doing something more extreme — like radically shifting media spend or introducing new creative — could have a much bigger impact.

And even when applying the new statistical methods to the old data sets, the researchers still found some instances in which advertising had an outsized impact on commercial outcomes.

‘The positive coefficients on TV and Internet show that these media are more effective, with internet-based advertising being most effective,’ writes Korkames.

Advertising was also shown to be especially effective for products and services that are still in their growth phase, and for entertainment products.

‘Meta-analysis of advertising effectiveness: New insights from improved bias corrections’ was written by Joseph Korkames, TD Stanley and Stefan Stremersch, and drew on 93 existing research papers to inform its analysis.